How Science Is Broken Pt. 1

Getting into the weeds on mask studies.

In the wake of my last post, friends of mine sent me three scientific studies to contradict my stance that masks don’t work on the general public.

Let me state up front: I have many good people in my life, these gentlemen especially included, who are very sincere about their trust in masks, and I truly don’t want to disparage them for it. This is a complicated topic — I wrote four distinct pieces this summer looking at it from different angles (available epidemiology, droplets vs. aerosols, viral interference, and historical or evolutionary perspectives).

But I decided to go through each of these studies as granularly as I could, to show why science today is probably broken, why you need to read stories about these studies with a critical eye, and how to spot a study that doesn’t say what the headlines say.

The first study sent to me was the recently-famous Bangladesh mask study by Yale economics professor Jason Abaluck et al. (It’s currently a working paper put out by the National Bureau of Economic Research.) This came out at the beginning of the month and was hailed as proof that masks are effective at stopping the spread.

One of the inspirations for this Substack, el gato malo, published two extremely detailed takedowns of this study, but I’ll give the highlights here.

Part of the problem with mask studies in general is that you can’t do a true double-blinded randomized controlled trial. In a drug trial, you would divide the participants into two groups after making sure they’re appropriately matched, give half of them a placebo and half of them the study drug, and tell neither the participant nor the person administering it which group each participant is in. For obvious reasons, you can’t do that with masks. That becomes a real problem for data collection in this instance, because all of the symptoms were self-reported by participants who knew which group they were in.

A quick recap of how this study was conducted: they picked a bunch of remote villages in Bangladesh and had some of them wear masks and some of them not. But here’s a key thing to note: they couldn’t do precise testing to mark the start state of a village. If a village had already been through its COVID wave, the study authors would have had no way of knowing, because the country is too poor to have enough lab capacity to test. (The study remarks that previous studies found “two orders of magnitude more infections than reported cases”, i.e. only about 1 infection out of every 100 was documented.)

Given that this is going to have a huge effect on any infection spread later on, particularly with only 5 or 9 weeks of follow-up (or 12 weeks for serology testing) and the small number of cases they were screening for, their study is confounded before they even get out of the starting gate.

Then let’s look at what their intervention actually involved: there was a mix of masks, education, signage, public signaling, and text messages. There was also an observed increase in physical distancing. Which of these made a difference? Which one drove the outcome, and which just came along for the ride? We can never know, because the key to a study like this is a single independent variable. You keep as much constant as you can except for the thing you’re trying to study.

Now let’s look at figure 3 out of the report.

What this says is that surgical mask use was associated with a statistically significant decrease in symptomatic cases confirmed by blood test, but only among people above 50. Again, because this was all based on self-reported symptoms, could this be because the older population was more likely to do what the study authors asked them to do? Could the younger population be reluctant to say whether they’d had symptoms? Could a study participant, not wanting to let the authors down by suggesting their intervention had failed, simply lie on the survey? And what about asymptomatic spread, which you’d only be able to catch with an antibody test?

Let’s look at this paragraph out of the report:

In a telephone survey of respondents at the end of April 2020, over 80% self-reported wearing a mask and 97% self-reported owning a mask. The Bangladeshi government formally mandated mask use in late May 2020 and threatened to fine those who did not comply, although enforcement was weak to non-existent, especially in rural areas. Anecdotally, mask-wearing was substantially lower than indicated by our self-reported surveys. To investigate, we conducted surveillance studies throughout public areas in Bangladesh in two waves. The first wave of surveillance took place between May 21-25, 2020 in 1,441 places in 52 districts. About 51% out of more than 152,000 individuals we observed were wearing a mask. The second wave of surveillance was conducted between June 19-22, 2020 in the same 1,441 locations, and we found that mask-wearing dropped to 26%, with 20% wearing masks that covered their mouth and nose.

Key takeaway: study participants will lie to study authors. I knew this about food frequency questionnaires in nutritional epidemiology, and now I know it about viral epidemiology.

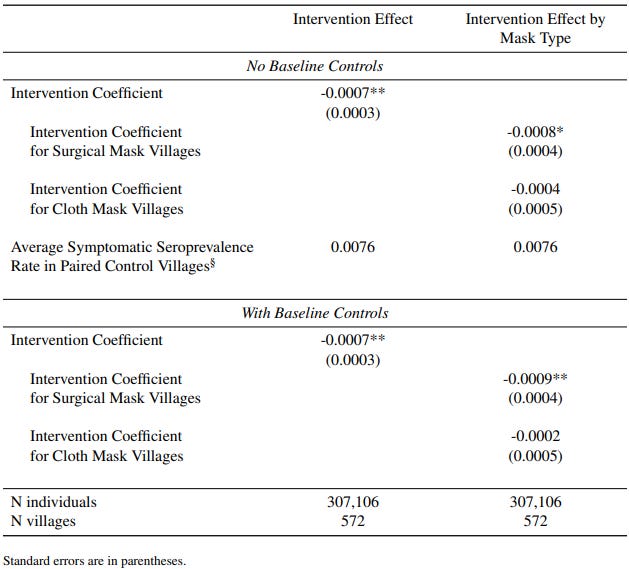

One last thing on the Bangladesh study: let’s look at this table from the appendix that includes the error bounds. Look at the intervention coefficient for cloth mask villages in both tables:

When the standard error is greater than the calculated effect, it means they weren’t able to determine whether there was an effect at all. There’s even a chance that the intervention had the opposite effect from the one they were trying to achieve. And yet, this study was hailed as “the most important single piece of epidemiological research of the entire pandemic”.
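To make the standard-error point concrete, here is a minimal sketch of how an approximate 95% confidence interval is built from a coefficient and its standard error. The numbers are made up for illustration and are not taken from the paper’s tables, and the 1.96 multiplier assumes a normal approximation.

```python
def confidence_interval(estimate, std_error, z=1.96):
    """Return the (low, high) bounds of an approximate confidence interval."""
    margin = z * std_error
    return estimate - margin, estimate + margin


def spans_zero(estimate, std_error, z=1.96):
    """True when the interval includes zero, i.e. the sign of the effect is undetermined."""
    low, high = confidence_interval(estimate, std_error, z)
    return low <= 0.0 <= high


# Hypothetical coefficient and standard error (NOT values from the study).
example_estimate = -0.0005
example_std_error = 0.0008

low, high = confidence_interval(example_estimate, example_std_error)
print(f"95% CI: [{low:.4f}, {high:.4f}]")
print("Interval includes zero:", spans_zero(example_estimate, example_std_error))
```

Whenever the standard error is larger than the estimate in absolute value, the interval has to cross zero for any multiplier of 1 or more, which is why the data can’t rule out an effect in either direction.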

Okay.

“Publish or Perish” has been a phrase used in academia for about a century, though it’s only really gained a foothold as a widespread issue within the last thirty years or so.

According to this 2013 study in the Journal of Research in Medical Sciences by Rawat and Meena,

The increase in the number of publications has led to the growth of many new journals. In 2006 alone, approximately 1.3 million peer reviewed scientific articles were published, aided by a large rise in the number of available scientific journals from 16,000 in 2001 to 23,750 by 2006.[3] The increasing scientific articles have fuelled the demand for new journal [sic]… The acceptance and appreciation of a publication is frequently gauged by citation index. Only 45% of the articles published in 4500 top scientific journals are cited within the first 5 years of publication, a figure which appears to be dropping steadily.[4] Only 42% of the papers receive more than one citation, 5-25% of these are self-citation by the authors or journals.[5] Majority of the publications still goes uncited.

In layman’s terms, if you have a job at a research university, you are expected to regularly publish studies and articles. The more studies you run and papers you publish, the more grant money the university gets. According to this January 2011 paper from the Association of American Universities, “the federal government supports about 60 percent of the research performed at universities. In 2009, that amounted to the federal government supporting about $33 billion of universities’ total annual R&D spending of $55 billion”. So competition for these grants gets fierce, as the grant money becomes an important part of a university’s operating budget.

This leads to a related problem in research called data dredging, significance chasing, or p-hacking. This is a giant issue in epidemiology, where researchers will gather a massive amount of data, then slice it a thousand different ways until they come up with a statistically significant correlation, as measured by a statistic called the p-value. But this type of correlation says nothing about cause and effect; it only shows that two variables have a statistical relationship, and when you run enough comparisons, some will cross the significance threshold by chance alone.
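Here is a rough sketch of why slicing data many ways is dangerous. It assumes every comparison is truly null (no real effect), that the comparisons are independent, and that the usual 0.05 threshold is used; under those assumptions each p-value is uniformly distributed between 0 and 1. The function names are my own, for illustration only.

```python
import random


def chance_of_false_positive(num_tests, alpha=0.05):
    """Probability that at least one of num_tests independent null tests has p < alpha."""
    return 1.0 - (1.0 - alpha) ** num_tests


def simulate_dredging(num_tests, alpha=0.05, trials=10_000, seed=0):
    """Estimate the same probability by simulation.

    Assumes every hypothesis tested is truly null, so each p-value is
    drawn uniformly from [0, 1).
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        p_values = [rng.random() for _ in range(num_tests)]
        if min(p_values) < alpha:
            hits += 1  # at least one "significant" result found by chance
    return hits / trials


for k in (1, 20, 100):
    print(k, round(chance_of_false_positive(k), 3), round(simulate_dredging(k), 3))
```

With a single test the false-positive chance is 5%, but with 20 independent looks at pure noise it is already roughly 64%. Slice the data a thousand ways and a “significant” finding is all but guaranteed.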

A related problem is the replication crisis: a growing number of studies are conducted, peer reviewed, and published, but attempts to replicate them fail. Replication is one of the key tenets of science: if we study a thing, then study the thing again, does the same thing happen? According to this May 2021 paper in Science Advances by Serra-Garcia and Gneezy, “Nonreplicable publications are cited more than replicable ones”,

In psychology, only 39% of the experiments yielded significant findings in the replication study, compared to 97% of the original experiments. In economics, 61% of 18 studies replicated, and among Nature/Science publications, 62% of 21 studies did. In addition, the relative effect sizes of findings that did replicate were only 75% of the original ones. For failed replications, they were close to 0% [see also (8–10)].

But more importantly, “papers that replicate are cited 153 times less, on average, than papers that do not”. It’s another instance of “a lie makes it halfway around the world before the truth gets its boots on”.

Do a study, exaggerate its findings or otherwise fudge the data, then get a big press push off of it, even though no one can verify the results later. Lather, rinse, repeat.

Study Two was published in the American Journal of Tropical Medicine and Hygiene in August 2020 by Christopher Leffler, et al. and is called “Association of country-wide coronavirus mortality with demographics, testing, lockdowns, and public wearing of masks”. According to the abstract, “duration of mask-wearing by the public was negatively associated with mortality (all p<0.001)”. They performed an analysis of 200 countries with publicly-available mortality data on “age, sex, obesity prevalence, temperature, urbanization, smoking, duration of infection, lockdowns, viral testing, contact tracing policies, and public mask-wearing norms and policies”.

They normalized the countries by aligning them on their estimated date of first infection or their announced mask mandate or lockdown. That’s enough of a confounder right there, because countries that implemented mask policies before their first infection even appeared would look better in the analysis, i.e. they’re preventing the spread of nothing.

What you would expect to see in this kind of study is a table showing “this country had masks and saw X deaths per 100,000, where that country did not have masks and saw Y deaths per 100,000”. This however is a multivariate analysis trying to break apart the impact of masks, lockdowns, international travel restrictions, obesity, and contact tracing on mortality. From the abstract,

In countries with cultural norms or government policies supporting public mask-wearing, per-capita coronavirus mortality increased on average by just 15.8% each week, as compared with 62.1% each week in remaining countries.

Again, it’s a multivariate analysis of observational data, so there’s at best an association between mask policies and deaths, and there are a lot of other factors that could be confounding the results.
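To get a feel for what the weekly growth rates quoted from the abstract imply when compounded, here is a small back-of-the-envelope sketch. The two rates come from the quote above; the eight-week horizon is my own arbitrary choice for illustration, not something from the paper.

```python
def cumulative_growth(weekly_rate, weeks):
    """Multiplicative growth after compounding weekly_rate for the given number of weeks."""
    return (1.0 + weekly_rate) ** weeks


MASK_RATE = 0.158      # weekly increase quoted in the abstract for mask countries
NO_MASK_RATE = 0.621   # weekly increase quoted for the remaining countries
WEEKS = 8              # hypothetical horizon, chosen only for illustration

mask_factor = cumulative_growth(MASK_RATE, WEEKS)
no_mask_factor = cumulative_growth(NO_MASK_RATE, WEEKS)
print(f"After {WEEKS} weeks: x{mask_factor:.1f} vs x{no_mask_factor:.1f}")
```

Because the rates compound, the gap between the two groups widens quickly with the horizon. That is one reason a result like this is so sensitive to where each country’s clock is started.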

But more importantly, this was published in the summer immediately following the worldwide outbreak, on August 4, 2020. Let’s look at some of those cited countries in the months after the study was published. Such as Japan…

Or the Philippines…

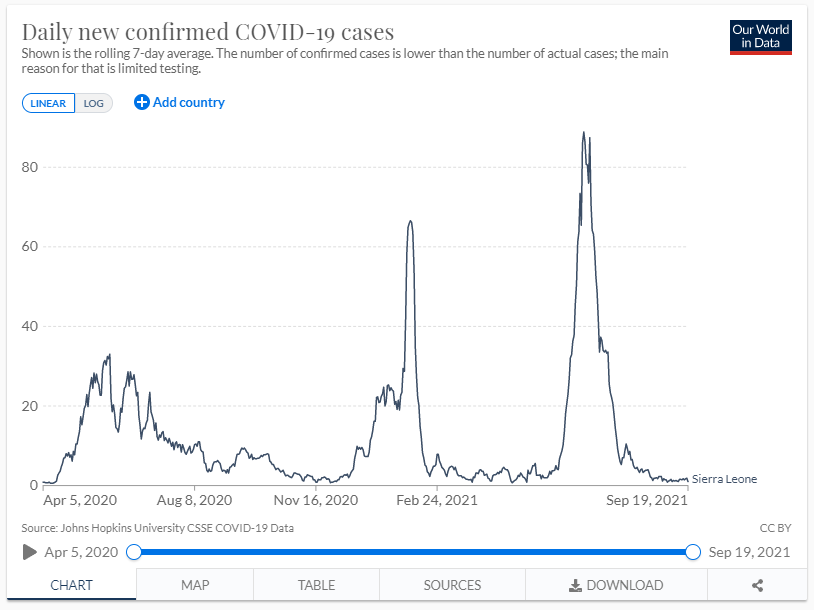

Or Sierra Leone…

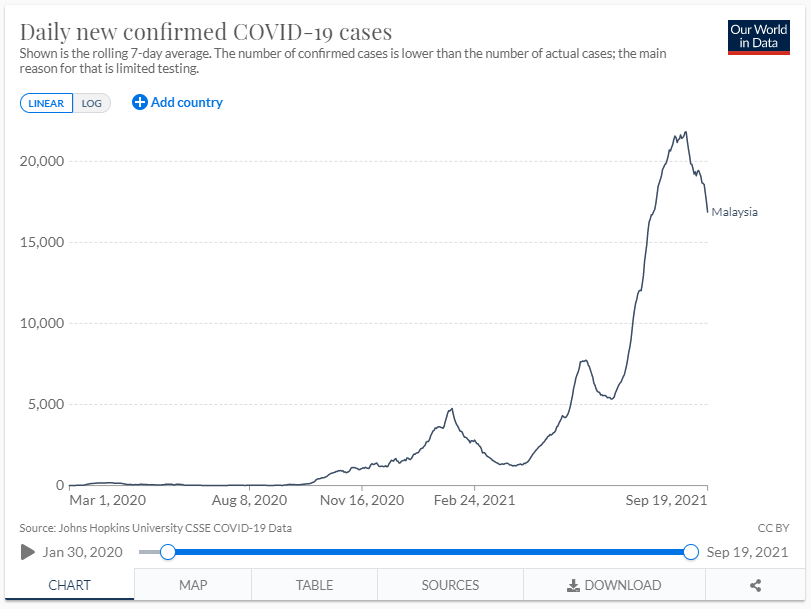

Or Malaysia…

Or Venezuela…

Or Taiwan…

Masks did a great job of preventing the spread, didn’t they?

At best, you might be able to say something like, “Mask mandates will work on a short-term basis, but as pandemics proceed fatigue will set in and mask usage will slip.” Except that Japan, according to the University of Maryland’s ongoing survey, has had reported mask compliance of between 95% and 98% since April of this year. And Taiwan had 95% compliance on May 13. And Malaysia had 93% compliance as of April 1, prior to their largest peak.

Beware: any study purporting to show mask utility on a country-wide basis carried an expiration date from the day it was published. And yet this study will never be retracted, or even annotated to note that its correlations were transitory.

This was a lot, and I still have one study left to break down and some more points to make about the State of Science. So more to come in Part 2.

You did a brilliant job on this essay. The charts said it all. I really like how you started the essay, recognizing the relationship you have with your 3 friends. I wonder how people who stand by the crucial importance of wearing masks would / will justify the charts of countries with high mask adherence. I wish masks worked. I think they don't because Covid spreads in aerosols... in the air, and every time we breathe in and out we may become infected... I think the gov't should be pushing the need to lose weight and protecting the elderly and people with pre-existing conditions... and vitamin D3... We should have been more like Sweden... and living freely now as they are in Denmark.