(Part 1 is here.)

I’ve long been aware of a problem with science journalism. Most reports of a new study are glorified press releases, uncritically featuring quotes from the study team hailing its findings as ground-breaking. The findings inevitably tie into a narrative that the media outlet already supports.

This study from the BMJ shows:

40% of the press releases contained exaggerated advice, 33% contained exaggerated causal claims, and 36% contained exaggerated inference to humans from animal research. When press releases contained such exaggeration, 58%, 81%, and 86% of news stories, respectively, contained similar exaggeration, compared with exaggeration rates of 17%, 18%, and 10% in news when the press releases were not exaggerated… At the same time, there was little evidence that exaggeration in press releases increased the uptake of news.

(I’ve taken out some detail related to confidence intervals, but they’re statistical disclosures and don’t change the gist of the article.)
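To put those percentages side by side, here is a quick sketch that divides the exaggeration rate in news stories when the press release was exaggerated by the rate when it wasn’t. These are crude ratios of the quoted percentages, not the adjusted odds ratios the study itself reports, and the dictionary keys are just my own labels.

```python
# Percentages quoted above: (news exaggeration rate when the press release
# was exaggerated, rate when it was not).
rates = {
    "advice": (58, 17),
    "causal claims": (81, 18),
    "animal-to-human inference": (86, 10),
}


def rate_ratio(with_exaggeration, without_exaggeration):
    """Crude ratio of two percentages; not an adjusted odds ratio."""
    return with_exaggeration / without_exaggeration


ratios = {category: rate_ratio(*pair) for category, pair in rates.items()}

for category, ratio in ratios.items():
    print(f"{category}: {ratio:.1f}x more likely to be exaggerated in the news")
```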

And the authors of this study in BMC Medicine intercepted press releases from universities before they were sent to the media. The study found that when the press releases were more carefully worded to remove exaggeration of the findings, the resulting articles were similarly careful, containing caveats of correlation vs. causation, but they didn’t get reported any less.

I thought journalists were supposed to be skeptical, to look for the angles that would change the story, to disbelieve what they’re told until it can be verified. I don’t think that’s the case anymore, and science reporting is the most egregious example.

The third study sent to me was a review study published in Advances in Colloid and Interface Science in April 2021 by Ju, et al. and is called “Face masks against COVID-19: Standards, efficacy, testing and decontamination methods”. (This one is behind a pay wall, but my friend was gracious enough to send it along.) It’s a doozy, but I’ll break it down for you.

The study sets the stage by stating that the WHO’s April 2020 recommendation against widespread mask use was based in part on a global effort to alleviate PPE shortages among healthcare workers, which is exactly the kind of noble lie I cited in a previous article. Then in July 2020, the CDC recommended public mask wearing, based to some undetermined degree on the case study (read: anecdote) of two hair salon workers in Springfield, MO who saw 139 clients while infected and masked and reportedly didn’t spread the virus. However, while contact tracing of the clients turned up no reported symptoms, permission was obtained to test only half of them. So at best it’s a low-quality study with some gaps in what we can derive from it, particularly with only 139 people. And yet this was to some extent the basis for the recommendation that everyone, symptomatic or not, wear masks.

The study breaks apart the mask data into three categories: quality standards and filtration mechanisms; qualitative determination of mask integrity and filtration efficacy; and decontamination that will allow reuse of masks, specifically N95s and surgical masks.

To paraphrase Quiz Show, I’ll take the third part first. This section is about the reuse of proper masks in a medical setting. Fit-testing an N95 to OSHA standards takes about ten minutes. You’re putting on the respirator, putting a hood over that, and spraying a solution inside the hood to check whether the spray is penetrating the mask. There are all sorts of guidelines about what to do after you take it off to keep from contaminating yourself.

In my research, I wasn’t able to find a single instance of anyone from the CDC or any public health agency saying “get an N95, have it fit-tested, and throw it away after X hours’ worth of use.” If they recommend an N95, it’s in the context of it being the best protection, but with nothing about how to ensure its fit, its time-limited efficacy, how it needs to be cleaned between uses to maintain its integrity, or that men with facial hair can’t wear them because any gap along the edges will ruin its effectiveness. More on this in a minute.

Now the first part. This is a review of the epidemiological evidence around masks. I’ve written about the problems with epidemiology before, but remember that all of this is evidence of correlation, not causation. They discuss a lot of studies, but there’s a problem: the review cherry-picks the evidence that supports its conclusion, ignores the evidence that doesn’t, and misrepresents some of what it does cite. For example,

In Thailand, a retrospective case-control study examined 1050 asymptomatic contacts of COVID-19 positive individuals; 211 of the contacts later tested positive, and 839 of them never tested positive [36]. It was found that compliance with mask-wearing during contact with the COVID-19 positive individuals was strongly associated with a > 70% reduced risk of infection for those who always wore a mask, compared to those who never wore one [36].

Again, this is correlation, not causation. Could the people who always wore a mask be more careful in general, avoiding contact, avoiding crowds, fastidiously washing their hands? We’ll never know. All we know is what they tell us.

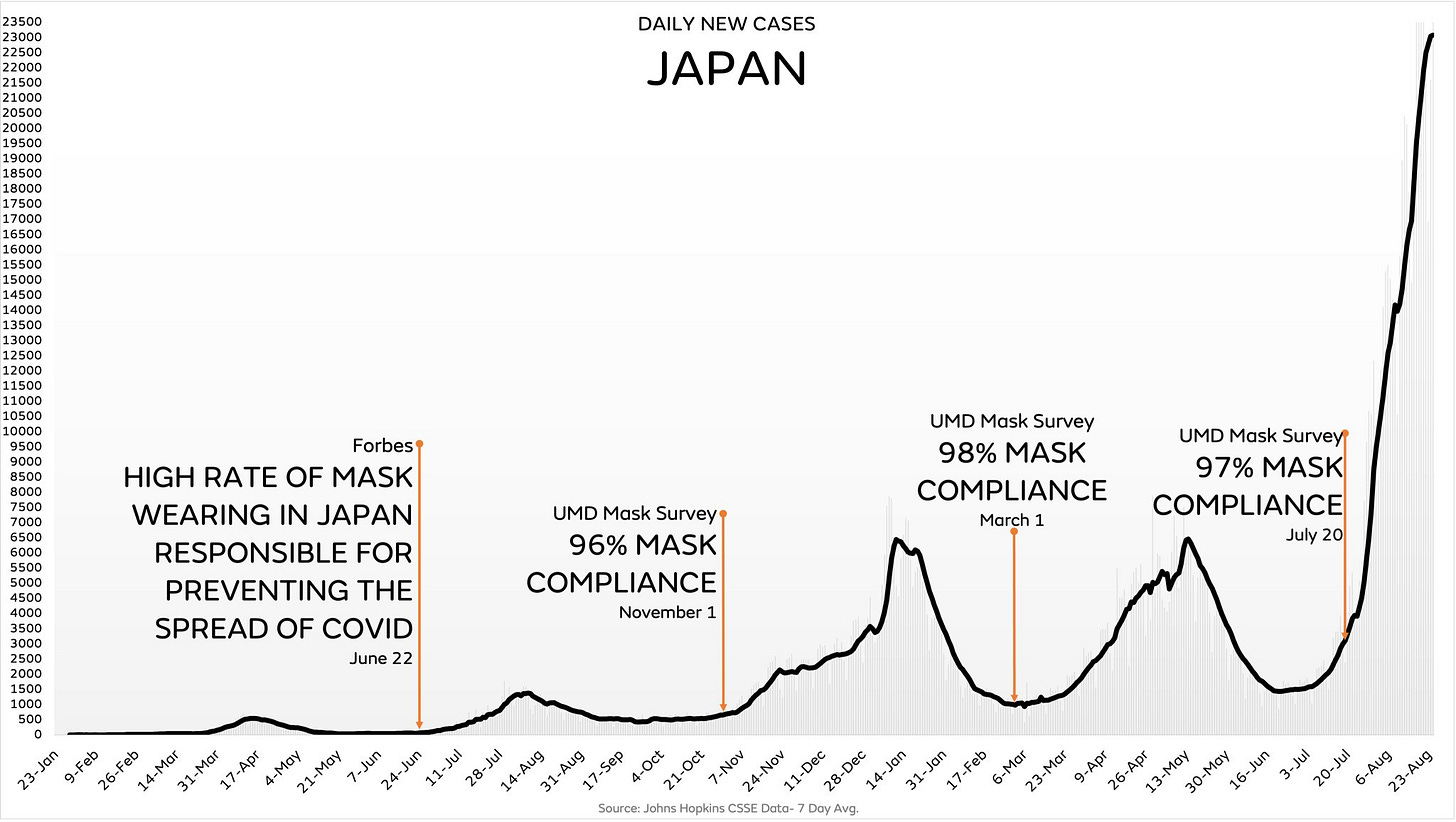

It cites this study from Goldman Sachs that says 80% of Massachusetts residents self-reported always wearing a mask vs. 40% in Arizona. That could just as easily tell you that Massachusetts residents are more concerned with the virtue signaling of being perceived as on the “right” side of the issue, rather than what they actually do. But more importantly, this Goldman Sachs study is subject to the same errors described in my previous post. It reports 90% mask wearing in East Asia, but it was published in June 2020, and every one of their vaunted East Asian countries exploded with cases in 2021 despite ongoing mask mandates.

Let’s talk about a guy named Sir Austin Bradford Hill for a second. He was a 20th-century epidemiologist who pioneered the design of the randomized controlled trial. He proposed nine criteria for judging when an epidemiological association can reasonably be inferred to be causal, the first of which is the strength of the association. (Hill himself treated them as considerations to weigh rather than a strict checklist.) For example, it was observed that chimney sweeps got scrotal cancer at 200 times the rate of other occupations, so you can reasonably infer causality.

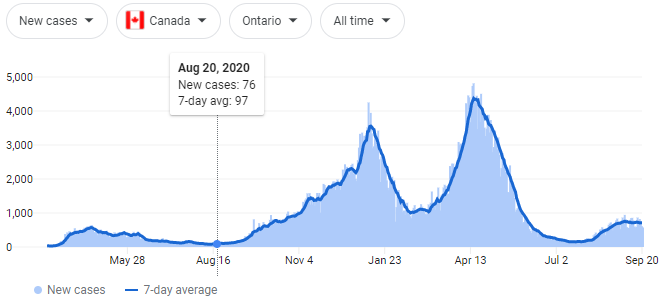

The Ju review cites a study from August 2020 in Canada where a mask mandate was issued in Ontario, and within the first few weeks, the average weekly number of new infections decreased 25-31%. A nice number? Sure. High enough to be causative? Not a chance. Seen in other areas that passed mask mandates? Nope. Did the cases stay low, even though the mask mandates were never rescinded? Hah.

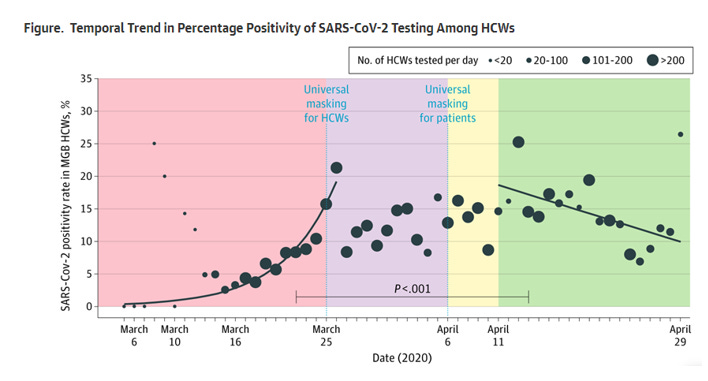

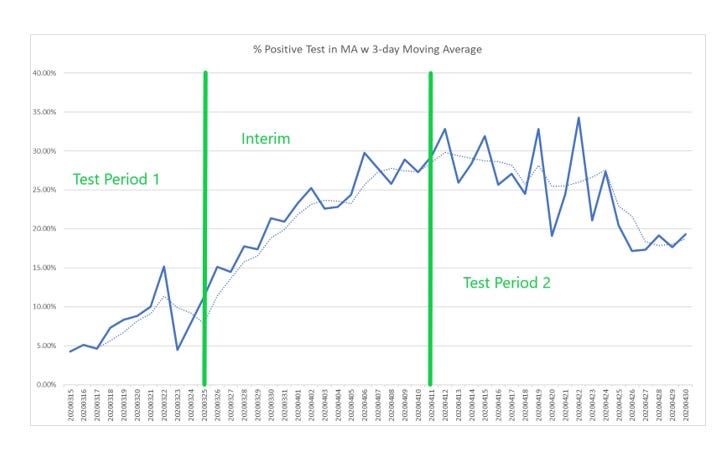

It mentions the Mass General Brigham case study! I just learned about this one this week, which is why I’m excited. It says that after a mask mandate was passed for patients and staff in this hospital system, cases went down 15-20%. But this is something I always have to ask in my data analysis job: what is your baseline? So let’s see the case graph from the study:

Compared to the case graph for the state during the same time period:

It’s the same curve! Cases went down at the hospital because they went down everywhere!
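The baseline question can be made concrete with a small sketch: compute the percent change at the hospital, compute it for the surrounding state over the same window, and look at the difference. The numbers below are hypothetical placeholders I made up for illustration. They are not read off either graph, and the function names are my own.

```python
def percent_change(before, after):
    """Percent change from `before` to `after` (negative means a decline)."""
    return (after - before) / before * 100.0


def excess_change(hospital_before, hospital_after, state_before, state_after):
    """Hospital percent change minus the state's percent change (the baseline)."""
    return (percent_change(hospital_before, hospital_after)
            - percent_change(state_before, state_after))


# HYPOTHETICAL case counts, for illustration only; not data from either study.
hospital = (100, 80)    # before, after
state = (5000, 4000)    # before, after

print(f"hospital: {percent_change(*hospital):.1f}%")
print(f"state:    {percent_change(*state):.1f}%")
print(f"excess:   {excess_change(*hospital, *state):.1f} percentage points")
```

If the excess is close to zero, the drop at the hospital is no bigger than what the baseline already explains.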

The last paragraph of this section states, “While the methods and results presented here do vary, all available epidemiologic evidence suggests that community-wide mask-wearing results in reduced rates of COVID-19 infections.” Again, epidemiological studies can only inform on association. It doesn’t matter if you have 1, 10, 100, or 1000 studies. Zero plus zero plus zero plus zero equals… zero.

“It is predicted that community-wide mask-wearing is able to reduce Rt to below 1.”

Lastly, part two of the Ju study has eight pages dealing with the standards, testing methods, and efficacy of masks. This part gets super-technical, and is a really interesting read if you want to get deep into this.

On page 6, there’s a paragraph about quantitative fit tests, using a machine to check how well a mask is sealing around the edges. A 200 is a perfect score, a score over 100 is considered a pass. It states,

Using the QNFT, it was found that 100% of surgical masks tested failed under normal breathing conditions… [and] had a fit factor in the range of only 2.5-9.6, depending on the brand of the mask and individual face shape.
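To translate those fit factors into something more intuitive: a quantitative fit factor is conventionally the ratio of the particle concentration outside the mask to the concentration inside it, so its reciprocal is roughly the fraction of outside particles that make it in. Here is a minimal sketch under that definition. I’m assuming the scores quoted above were computed that way, and the function name is mine.

```python
def inward_leakage_percent(fit_factor):
    """Approximate percent of ambient particles reaching the inside of the mask,
    assuming fit factor = outside concentration / inside concentration."""
    if fit_factor <= 0:
        raise ValueError("fit factor must be positive")
    return 100.0 / fit_factor


# 2.5 and 9.6 are the surgical-mask range quoted above; 100 is the pass mark.
for ff in (2.5, 9.6, 100, 200):
    print(f"fit factor {ff:>5}: ~{inward_leakage_percent(ff):.1f}% gets through")
```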

What the report doesn’t then state is, “Therefore, any discussion of the filtration of the mask is moot, because it’s all going around the edges.” All of the discussion about how well different fabrics filter particles of different sizes or at different pressures is meaningless, because unless the mask fits, everything leaks out. Indeed, on page 11 it states that leaks “may explain why there seemed to be no significant differences between N95 and surgical masks in healthcare workers, as strict compliance with PPE all the time is very unlikely.”

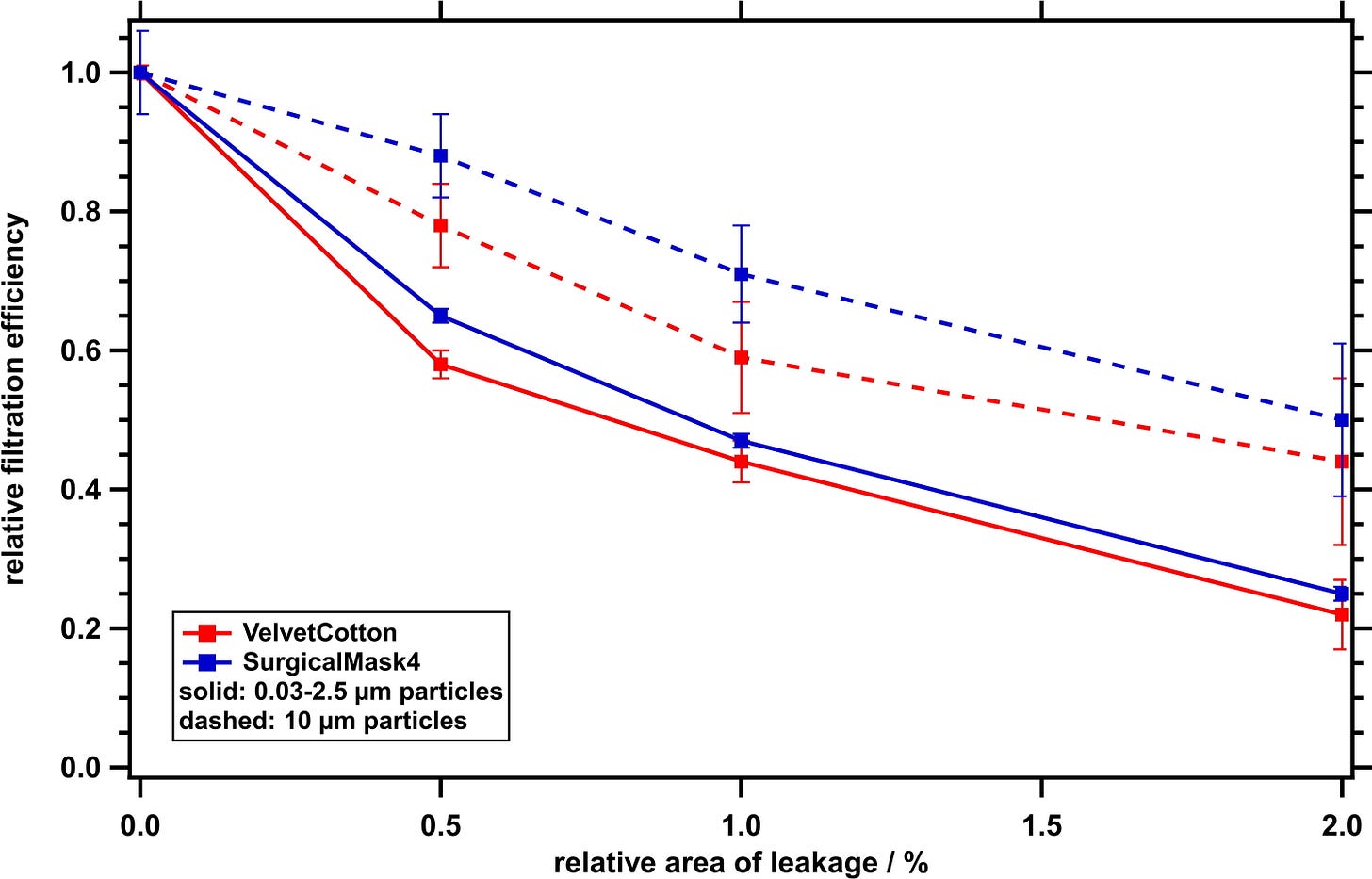

Let’s look at this graph real quick, from a study published in Aerosol Science and Technology by Drewnick et al.:

What this shows is that if you have a 0.5% relative area of leakage in your mask, the efficacy drops 70% for a surgical mask and 60% for a cloth mask. If you have a 1% relative area of leakage, it drops to 50% for both masks. So unless you’re duct-taping your mask around your nose and mouth, the gaps around the edges are ruining its effectiveness.

Back to the Ju study, there’s a section talking about filtration efficacy of different materials, all tested under laboratory conditions with no leakage around the sides. Again, as per above, this doesn’t mean anything in the real world. But let’s talk really quick about the other effect of these masks.

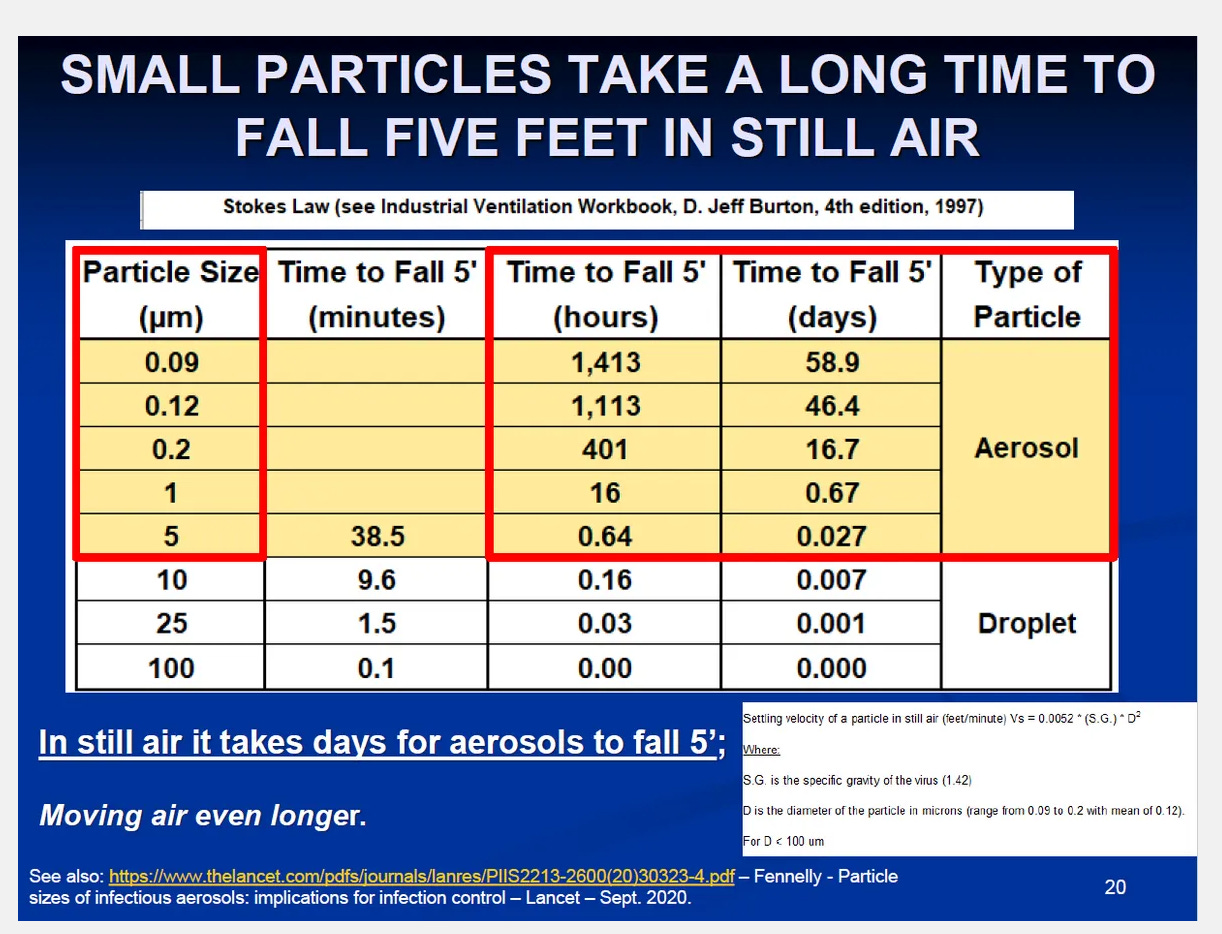

Something I don’t see in this article is the nebulizing effect of masks. When a droplet hits a porous medium under pressure, it’s like throwing a water balloon at a chain-link fence. The fence doesn’t capture the droplet; it blows it apart into much smaller aerosols, which are then cast off into the atmosphere. And aerosolized particles do… weird things.

A COVID virion is about 0.3 microns. So when virions are cast off from an infected person in aerosol form, they’re in the air for about two weeks. What you’re doing by wearing a mask is potentially breaking apart those larger droplets into smaller aerosols, forcing them to stay in the air longer than if you’d been wearing nothing.
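For a rough sense of why particle size matters so much for time aloft, here is a back-of-the-envelope sketch using Stokes’ law for a small sphere settling in still air. Everything in it is an assumption of mine: water-like particle density, room-temperature air, a 1.5 m drop, no evaporation, no air currents, and no slip correction for very small particles. Treat the output as order-of-magnitude only.

```python
G = 9.81                    # gravitational acceleration, m/s^2
AIR_VISCOSITY = 1.81e-5     # dynamic viscosity of air near 20 C, Pa*s (assumed)
PARTICLE_DENSITY = 1000.0   # assumed water-like particle density, kg/m^3


def settling_velocity(diameter_um):
    """Stokes terminal velocity in m/s for a sphere of the given diameter (microns)."""
    d = diameter_um * 1e-6
    return PARTICLE_DENSITY * G * d ** 2 / (18.0 * AIR_VISCOSITY)


def settling_time_seconds(diameter_um, height_m=1.5):
    """Time to fall `height_m` in perfectly still air (an idealization)."""
    return height_m / settling_velocity(diameter_um)


for d in (0.3, 3.0, 100.0):
    t = settling_time_seconds(d)
    print(f"{d:>6} um: {t:,.0f} s ({t / 86400:.2f} days)")
```

Because settling speed scales with the square of the diameter, a particle ten times smaller takes a hundred times longer to fall the same distance. Under these assumptions, large droplets settle in seconds while sub-micron particles stay up for days.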

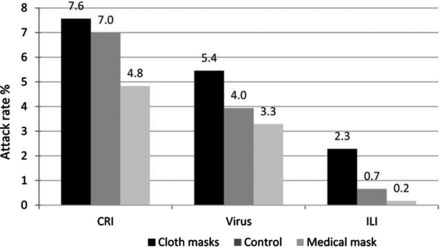

Then the Ju study cites one of my favorite references. In BMJ April 2015, MacIntyre et al. published “A cluster randomized trial of cloth masks compared with medical masks in healthcare workers”. This was a study conducted in medical wards in Vietnam, one of the mask-favoring countries cited in Leffler 2020. They randomized into three groups: medical masks, cloth masks, or a control group whose members could choose for themselves. (The IRB said it would be unethical to forbid them from wearing one.) They had the participants wear the masks for four weeks, then measured the prevalence in each group of clinical respiratory illness, influenza-like illness, or viral respiratory infection.

My favorite tidbit: men with beards, mustaches, or long stubble were disallowed from the study because the gaps would create too much of a confounder.

Now, look at what they observed in the three groups:

The people with cloth masks got sick more often than those in the control group, who could choose for themselves whether to wear a mask.

So my summary of Ju 2021:

Epidemiological evidence shows masks work, as long as you ignore that correlation doesn’t equal causation and ignore anything that happened after the cited studies were published.

Testing efficacy shows that masks work, except for the part about where the gaps make them totally ineffective.

There’s a bunch of different ways to clean masks effectively, which no one outside of a medical setting is actually doing.

Other than that, totally convincing.

What are my takeaways from all of this?

“Follow the Science”, if it meant anything before, means less to me now. I’ve tended to be skeptical about what I hear from the media before, but after reading these three studies, I truly won’t believe in any study until I sit down, read through the protocols, look at the data as cleanly as I can, and make my own decision as to how good a study it is.

The CDC was correct in their policy published in May 2020 that masks don’t work to control influenza spread. Early on when people hypothesized that COVID spread via droplets, I can understand guidance suggesting masks work. But as soon as the evidence showed that it’s spread via aerosols, scientists and public health officials should have come forward and changed that guidance.

Groupthink, or as Gary Taubes calls it “intellectual phaselock”, is driving the bus in science. Once a memetic idea takes hold, that’s where the grant money goes, those who try to disprove the hypothesis are ostracized, and everything keeps spiraling out of control. Science, properly conducted, is about challenging assumptions, about taking a hypothesis and designing studies to disprove it. That’s not how these studies are done.

Let me be clear. I can make an argument for very tightly controlled scenarios where I think a mask may make a difference. A person wearing a properly-fitted mask in an infectious space may have a few more minutes of “safety” than one without.

But I have yet to see any evidence that population-wide recommendations or mandates make any difference whatsoever, and I have yet to see any real-world mechanistic explanations that are convincing.
